Azure CLI & PowerShell are your friends

Using Terraform together with Azure, Azure Pipelines & GitHub Actions

Update: to use Terraform with Azure Pipelines, you can use this script to populate ARM_* azuread/azurerm environment variables from within the AzureCLI task with addSpnToEnvironment: true.

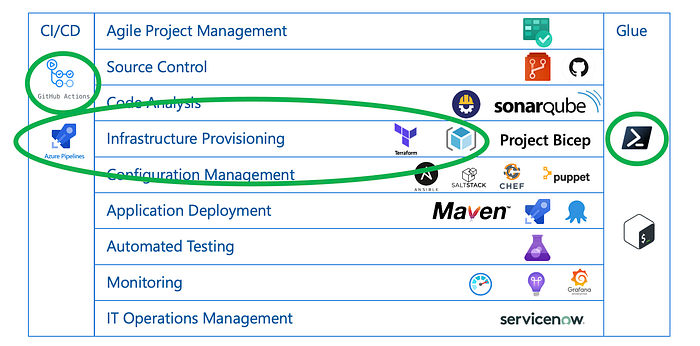

Terraform (infrastructure provisioning), Azure Pipelines (CI/CD) and GitHub Actions (CI) are all hugely popular, so it is no surprise they are often used together. While I have used a few Azure DevOps extensions and GitHub actions, I find myself mostly relying on scripts for the integration.

In this article, I share the methods I use to integrate Terraform with an Azure Pipeline or GitHub Actions workflow, how I deal with scenarios where a Terraform resource provider doesn’t support a certain feature, and some other tricks.

As I am an Azure guy, I will focus on creating Azure resources with Terraform. I will skip the Terraform basics; here is great (video) learning content as an introduction. My other technology choices are PowerShell as scripting shell, and YAML Azure Pipelines, i.e. I ignore classic (ClickOps) pipelines. The patterns below translate well to other hyperscalers and tools though.

The topics I’ll cover below are:

- Azure Provider Configuration

- Provider Versioning

- Azure Backend Configuration

- Terraform Versioning

- Input Variables

- Feature fallback to Azure CLI

- Output Variables

- Teardown

- End-to-end examples

For reference, the tools used and where they fit into the DevOps tools taxonomy:

For a complete reference model of DevOps tools, please refer to the Periodic Table:

Azure Provider Configuration

I never use separate credentials for Terraform, period. When Terraform is used interactively, it can inherit Azure CLI user credentials, as described here. There are basically 2 flavors of script to make sure subscriptions are aligned between Azure CLI and Terraform:

or
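As a minimal sketch of what the two directions could look like in a POSIX shell (the function names are made up for illustration, and this is an assumption about the approach rather than the scripts referenced above): either Terraform follows the subscription that is active in Azure CLI, or Azure CLI follows the subscription Terraform is configured for through ARM_SUBSCRIPTION_ID.

```shell
# Keep Azure CLI and Terraform pointed at the same subscription.
# Function names are hypothetical; az and ARM_SUBSCRIPTION_ID are real.

# Flavor 1: Terraform follows the subscription that is active in Azure CLI.
use_cli_subscription_for_terraform() {
  ARM_SUBSCRIPTION_ID="$(az account show --query id --output tsv)"
  export ARM_SUBSCRIPTION_ID
}

# Flavor 2: Azure CLI follows the subscription Terraform is configured for.
use_terraform_subscription_for_cli() {
  az account set --subscription "${ARM_SUBSCRIPTION_ID:?ARM_SUBSCRIPTION_ID is not set}"
}
```

Call whichever flavor matches your source of truth once, before running terraform plan or apply.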

That covers interactive use. So how can you share credentials in automation? Terraform only supports using an Azure CLI session when authenticated as a user, not a service principal. There is another approach for Azure Pipelines, but you will need to have an Azure Service Connection configured for your pipeline. If you haven’t done so, create one as described here. Use the Azure CLI task, and configure it to expose the Service Principal credentials as environment variables (addSpnToEnvironment: true). This allows you to capture the Service Principal credentials and configure Terraform with them:

Note the use of the null-coalescing assignment operator ??=. Environment variables are only set when they are not defined yet; values that have already been defined are not overwritten.
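If the Azure CLI task runs a POSIX shell instead of PowerShell, the same set-only-when-unset behaviour can be sketched with the ${VAR:-default} expansion. This is a sketch under assumptions: the function name is hypothetical, and the subscription ID is passed in as an argument rather than looked up inside the function. servicePrincipalId, servicePrincipalKey and tenantId are the variables the task exposes with addSpnToEnvironment: true.

```shell
# Copy the service principal details exposed by the AzureCLI task
# (servicePrincipalId, servicePrincipalKey, tenantId) into the ARM_* variables
# read by the azurerm and azuread providers. ARM_* values that are already
# set are left untouched.
export_arm_credentials() {
  # $1: subscription ID, e.g. "$(az account show --query id --output tsv)"
  export ARM_CLIENT_ID="${ARM_CLIENT_ID:-${servicePrincipalId:-}}"
  export ARM_CLIENT_SECRET="${ARM_CLIENT_SECRET:-${servicePrincipalKey:-}}"
  export ARM_TENANT_ID="${ARM_TENANT_ID:-${tenantId:-}}"
  export ARM_SUBSCRIPTION_ID="${ARM_SUBSCRIPTION_ID:-${1:-}}"
}
```

Inside the task this would be called as export_arm_credentials "$(az account show --query id --output tsv)" before any terraform command.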

In GitHub Actions, there is (currently) no native integration with Azure in the sense that a pre-existing connection can be re-used. Instead, the Azure login action requires a secret to be configured as described here. This will store a JSON value like the below as a secret:

Once stored, the same secret can be used for Terraform:
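A rough shell sketch of that translation, assuming the secret holds the clientId, clientSecret, subscriptionId and tenantId fields that az ad sp create-for-rbac --sdk-auth produces, and assuming jq is available on the runner. AZURE_CREDENTIALS is a hypothetical name for the environment variable the workflow maps the secret to, and the function name is made up as well.

```shell
# Translate the JSON stored in the GitHub secret into the ARM_* variables
# used by the azurerm and azuread providers. Requires jq.
export_arm_credentials_from_json() {
  local json
  # $1: the JSON document; falls back to AZURE_CREDENTIALS (hypothetical name)
  json="${1:-${AZURE_CREDENTIALS:?AZURE_CREDENTIALS is not set}}"
  ARM_CLIENT_ID="$(printf '%s' "$json" | jq -r '.clientId')"
  ARM_CLIENT_SECRET="$(printf '%s' "$json" | jq -r '.clientSecret')"
  ARM_SUBSCRIPTION_ID="$(printf '%s' "$json" | jq -r '.subscriptionId')"
  ARM_TENANT_ID="$(printf '%s' "$json" | jq -r '.tenantId')"
  export ARM_CLIENT_ID ARM_CLIENT_SECRET ARM_SUBSCRIPTION_ID ARM_TENANT_ID
}
```

Because the variables are exported in the current shell only, the function has to run in the same step as Terraform, or the values have to be passed on to later steps explicitly.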

As other Terraform providers either don’t need configuration (e.g. Certificates, Cloud-init, Random generator), or are configured dynamically (e.g. DNS, Helm, Kubernetes) based on the output of resources created by another provider (e.g. Azure, AAD), this covers 99% of my Terraform automation authentication set up.

Provider Versioning

This is actually standard Terraform, but I’ll cover it here: provider dependencies are (since version 0.14) captured in the .terraform.lock.hcl dependency lock file. HashiCorp recommends including this file in source control so it is available in automation.

Azure Backend Configuration

Terraform can maintain its state in a backend, instead of locally on disk. I use the azurerm backend, which uses a storage account and requires additional configuration. To be able to use the backend, without checking any sensitive information into Git, I use partial configuration. That is, I have a backend.tf template that is partially populated and then pass in the rest as arguments when initializing Terraform:
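To illustrate the partial configuration, here is a small shell sketch that builds the -backend-config arguments from environment variables. The TF_STATE_* variable names and the function name are hypothetical; resource_group_name, storage_account_name and container_name are settings of the azurerm backend, and the sketch assumes the state key itself stays in backend.tf.

```shell
# Print the -backend-config arguments for the azurerm backend, one per line.
# The TF_STATE_* names are hypothetical; define them as pipeline variables.
# The function aborts if one of them is missing.
backend_config_args() {
  printf '%s\n' \
    "-backend-config=resource_group_name=${TF_STATE_RESOURCE_GROUP:?}" \
    "-backend-config=storage_account_name=${TF_STATE_STORAGE_ACCOUNT:?}" \
    "-backend-config=container_name=${TF_STATE_CONTAINER:?}"
}
```

Usage would be terraform init $(backend_config_args), deliberately unquoted so each line becomes its own argument. That only works as long as the values contain no whitespace.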

To make sure Terraform has access to the storage account used for the backend, define either ARM_SAS_TOKEN (I recommend a container level SAS token), ARM_ACCESS_KEY (storage account key, not recommended), or grant the service principal running Terraform the Storage Blob Data Contributor role.

One thing a Terraform backend enables is the use of different workspaces, e.g. different configurations for dev, test, etc. If you are using a Terraform backend, it is a good idea to pin the workspace to be used via the TF_WORKSPACE environment variable. This ensures Terraform won’t touch resources created in other workspaces.

Terraform Versioning

To be consistent in the version of Terraform used, I use a .terraform-version file with tfenv locally (works on Linux & macOS only). To use the version stored in that file in Azure Pipelines:

Or in GitHub Actions, where a similar approach is:
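Both variants start by reading the pinned version from the file. A minimal shell sketch of that step follows; the function name is hypothetical, and handing the value to whatever installs Terraform is left to the pipeline.

```shell
# Read the pinned Terraform version from a .terraform-version file.
# Only the first line is used; whitespace (including a Windows carriage
# return) is stripped.
read_terraform_version() {
  # $1: path to the version file, defaults to the current directory
  head -n 1 "${1:-.terraform-version}" | tr -d '[:space:]'
}
```

In Azure Pipelines the result could be published with echo "##vso[task.setvariable variable=terraformVersion]$(read_terraform_version)", and in GitHub Actions with echo "terraform_version=$(read_terraform_version)" >> "$GITHUB_OUTPUT". The variable names terraformVersion and terraform_version are placeholders.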

Input Variables

Input variables require special treatment in Azure Pipelines. Pipeline variables are always converted to uppercase environment variables, whereas Terraform input variables are (as per naming convention) lowercase. Assume there is an input variable foo:

To override a variable’s value, an Azure Pipeline variable TF_VAR_foo="bar" is defined, but it will be converted to an environment variable TF_VAR_FOO="bar" and therefore Terraform won’t see it and will use “notbar” as value for var.foo.

To get around this, some voodoo with environment variables is required. The below code covers both Linux and Windows (a Linux-only version could be shorter):

TF_VAR_FOO will be converted back to TF_VAR_foo, and Terraform will use “bar” as value for var.foo.
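For comparison, a Linux-only version in POSIX shell could look roughly like the sketch below. It assumes every Terraform input variable name is entirely lowercase, since the original casing cannot be recovered from the uppercased name, and the function name is hypothetical.

```shell
# Re-export every TF_VAR_<NAME> environment variable as TF_VAR_<name>.
# Assumes all Terraform input variable names are lowercase.
restore_tf_var_case() {
  local name suffix lower value
  # Collect the names of all environment variables that start with TF_VAR_
  for name in $(env | sed -n 's/^\(TF_VAR_[A-Za-z0-9_]*\)=.*/\1/p'); do
    suffix="${name#TF_VAR_}"
    lower="$(printf '%s' "$suffix" | tr '[:upper:]' '[:lower:]')"
    if [ "$suffix" != "$lower" ]; then
      # Indirect lookup; $name only contains [A-Za-z0-9_], so eval is safe here
      eval "value=\"\${$name}\""
      export "TF_VAR_${lower}=${value}"
      unset "$name"
    fi
  done
}
```

The function has to run in the same shell session that later invokes Terraform, because the re-exported variables do not survive into the next pipeline task.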

As GitHub Actions do not modify case of environment variables (hurray), there is nothing to fix there.

While we’re discussing environment variables, make sure to also set TF_IN_AUTOMATION=true and TF_INPUT=0. TF_INPUT=0 prevents Terraform from stopping to prompt for user input during automation, and TF_IN_AUTOMATION adjusts Terraform’s output for non-interactive use.

Feature fallback to Azure CLI

As anybody working with Terraform will know, providers for cloud services typically do not implement 100% of the underlying service. Either a given resource is entirely unsupported, or a certain feature of an otherwise supported resource cannot be configured through the provider. In fact this (resource API coverage) is the main downside to using Terraform.

Well, what if you could have the best of both worlds? The Terraform azurerm provider has the azurerm_resource_group_template_deployment resource as a catch-all approach, but personally I prefer another technique: use the Terraform local-exec provisioner with Azure CLI:

In this specific case, the azurerm_application_insights resource does not yet support integration with a Log Analytics workspace (in azurerm provider version 2.51). Azure CLI is invoked after the resource is created to perform the part of the provisioning that Terraform can’t deliver. Note that no shell is specified, so this works regardless of whether Bash or PowerShell is used. As we set up Terraform to use the same security credentials as Azure CLI, subscriptions line up, and the Azure CLI is idempotent, this just works.

Output Variables

Infrastructure is only part of a total solution that also includes applications. After Terraform provisioning has completed, data needs to be loaded and applications deployed on the resources that have been created. To be able to do that, we need to know the actual resource IDs or names of what was created. Those are typically available as Terraform output variables. The snippet below exports those variables as Azure Pipeline task output:
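A shell sketch of the same idea, assuming jq is installed on the agent: it reads the document produced by terraform output -json from standard input and prints one task.setvariable logging command per string output. The function name is hypothetical, and non-string outputs (lists, maps) are skipped to keep the example short.

```shell
# Turn `terraform output -json` (read from stdin) into Azure Pipelines
# logging commands that publish each string output as a task output variable.
# Outputs marked sensitive in Terraform are flagged as secrets. Requires jq.
emit_pipeline_outputs() {
  jq -r '
    to_entries[]
    | select(.value.type == "string")
    | "##vso[task.setvariable variable=\(.key);isOutput=true;issecret=\(.value.sensitive)]\(.value.value)"
  '
}
```

In a pipeline step this would be used as terraform output -json | emit_pipeline_outputs; the agent picks the commands up from the task's standard output.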

GitHub Actions has two models to pass data between steps: step outputs and environment variables. This snippet implements both:
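As a sketch in shell, assuming jq is available and using the GITHUB_OUTPUT and GITHUB_ENV files that the runner provides: each non-sensitive, single-line string output is appended to both files as a name=value line. The function name is hypothetical, and skipping sensitive outputs is a choice made for this example.

```shell
# Append every non-sensitive string output from `terraform output -json`
# (read from stdin) to both the step outputs and the job environment.
# Assumes single-line values. Requires jq.
export_github_outputs() {
  jq -r '
    to_entries[]
    | select(.value.type == "string" and (.value.sensitive | not))
    | "\(.key)=\(.value.value)"
  ' | tee -a "$GITHUB_OUTPUT" >> "$GITHUB_ENV"
}
```

Run it as terraform output -json | export_github_outputs in a step that has an id, so later steps can read the values either through steps.<id>.outputs.<name> or as plain environment variables.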

Teardown

If you’re running Terraform in CI to test provisioning, you’re also destroying infrastructure once verified. If this last step fails, infrastructure and associated costs may pile up, especially when using a nightly build. So to make sure infrastructure is always destroyed, even when Terraform fails to do so, I have the following approach. First, I make sure to define metadata to identify which resources got created:

With the above tags set up, the task below is able to perform the teardown in Azure Pipelines:

The same for GitHub Actions is:
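Stripped of the pipeline wrapping, the core of such a teardown might look like the shell sketch below. It assumes the resources live in resource groups carrying a tag named runid that holds the pipeline or workflow run identifier; that tag name and the function name are hypothetical stand-ins for whatever metadata you defined.

```shell
# Delete every resource group whose (hypothetical) runid tag matches the
# given run identifier. Deletion is started without waiting for completion.
teardown_tagged_groups() {
  # $1: run identifier to match against the runid tag
  az group list --query "[?tags.runid=='${1:?run id required}'].name" --output tsv |
    while read -r group; do
      if [ -n "$group" ]; then
        # stdin is redirected so az cannot consume the remaining group names
        az group delete --name "$group" --yes --no-wait </dev/null
      fi
    done
}
```

Configure the step to run even when earlier steps have failed (condition: always() in Azure Pipelines, if: always() in GitHub Actions), otherwise the safety net is skipped exactly when it is needed.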

End-to-end examples

The above examples have each been trimmed to address the described problem only. They can be combined in end-to-end scenarios, which I won’t include here but will simply link to:

Example Azure Pipeline (as YAML template): azure-vdc

Example Azure Pipeline (as YAML template): synapse-performance

Example GitHub Action workflow: azure-minecraft-docker